By Kirt Zimmer, Head of Social Media Marketing, Marvell

The OFC 2025 event in San Francisco was so vast that it would be easy to miss a few stellar demos from your favorite optical networking companies. That’s why we took the time to create videos featuring the latest Marvell technology.

Put them all together and you have a wonderful film festival for technophiles. Enjoy!

We spoke with Kishore Atreya, Senior Director of Cloud Platform Marketing at Marvell, who discussed co-packaged optics. Instead of moving data via electrons, a light engine converts electrical signals into photons—unlocking ultra-high-speed, low-power optical data transfer.

The 1.6T and 6.4T light engines from Marvell can be integrated directly into the chip package, minimizing trace lengths, reducing power and enabling true plug-and-play fiber connectivity. They are flexible, scalable, and built for switching, XPUs, and beyond.

By Michael Kanellos, Head of Influencer Relations, Marvell

You’re likely assaulted daily with some zany and unverifiable AI factoid. By 2027, 93% of AI systems will be able to pass the bar, but limit their practice to simple slip and fall cases! Next-generation training models will consume more energy than all Panera outlets combined! etc. etc.

What can you trust? The stats below. Scouring the internet (and leaning heavily on 16 years of employment in the energy industry), I’ve compiled a list of somewhat credible and relevant stats that put the energy challenge in perspective.

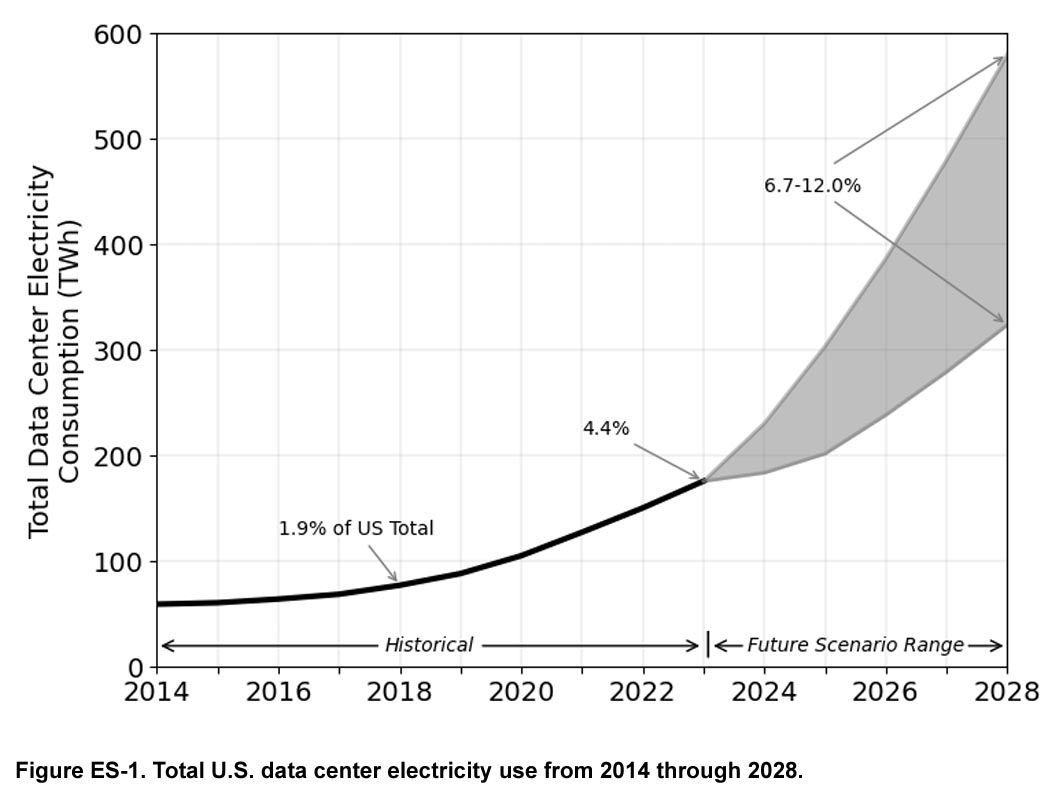

1. First, the Concerning News: Data Center Demand Could Nearly Triple in a Few Years

Lawrence Berkeley National Lab and the Department of Energy [1] have issued their latest data center power report, and it’s ominous.

Data center power consumption rose from a stable 60-76 terawatt hours (TWh) per year in the U.S. through 2018 to 176 TWh in 2023, or from 1.9% of total power consumption to 4.4%. By 2028, AI could push it to 6.7%-12%. (Lighting consumes 15% [2].)

Report co-author Eric Masanet adds that the total doesn’t include bitcoin, which increases 2023’s consumption by 70 TWh. Add a similar 30-40% to subsequent years too if you want.
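The percentages above can be sanity-checked with a few lines of arithmetic. The sketch below uses only the 2023 figures quoted in this section. The function names are illustrative, and the derived total is an inference from the quoted share, not a number taken from the report.

```python
# Figures quoted in the text above (U.S., 2023).
DATA_CENTER_TWH_2023 = 176.0    # data center consumption, TWh
DATA_CENTER_SHARE_2023 = 0.044  # 4.4% of total power consumption
BITCOIN_TWH_2023 = 70.0         # bitcoin consumption not counted in the total


def implied_total_twh(data_center_twh: float, share: float) -> float:
    """Total consumption implied by a data center load and its share of the total."""
    return data_center_twh / share


def uplift_fraction(extra_twh: float, base_twh: float) -> float:
    """Fractional increase when extra_twh is added on top of base_twh."""
    return extra_twh / base_twh


if __name__ == "__main__":
    total = implied_total_twh(DATA_CENTER_TWH_2023, DATA_CENTER_SHARE_2023)
    uplift = uplift_fraction(BITCOIN_TWH_2023, DATA_CENTER_TWH_2023)
    print(f"Implied total U.S. consumption in 2023: {total:,.0f} TWh")
    print(f"Bitcoin uplift on the 2023 data center figure: {uplift:.1%}")
```

The bitcoin uplift lands at the top of the 30-40% range mentioned above, which is why adding a similar share to later years is a reasonable rule of thumb.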

This article was originally published in VentureBeat.

Artificial intelligence is about to face some serious growing pains.

Demand for AI services is exploding globally. Unfortunately, so is the challenge of delivering those services in an economical and sustainable manner. AI power demand is forecast to grow by 44.7% annually, a surge that will double data center power consumption to 857 terawatt hours in 2028 [1]. If data centers were a nation today, that would make them the sixth-largest consumer of electricity, right behind Japan [2]. It’s an imbalance that threatens the “smaller, cheaper, faster” mantra that has driven every major trend in technology for the last 50 years.
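To get a feel for what a 44.7% annual growth rate means, here is a minimal compound-growth sketch. It uses only the growth rate and the 857 TWh figure quoted above. The helper names are hypothetical, and the baseline it derives is simply half of the 2028 figure (because the text says consumption doubles), not a number from the cited forecast.

```python
import math

AI_ANNUAL_GROWTH = 0.447      # 44.7% per year, as quoted above
DATA_CENTER_TWH_2028 = 857.0  # forecast data center consumption in 2028, TWh


def growth_multiplier(annual_rate: float, years: float) -> float:
    """How many times larger a quantity is after compounding for `years`."""
    return (1.0 + annual_rate) ** years


def doubling_time_years(annual_rate: float) -> float:
    """Years needed for a quantity to double at a constant annual growth rate."""
    return math.log(2.0) / math.log(1.0 + annual_rate)


def implied_baseline_twh(final_twh: float, multiple: float = 2.0) -> float:
    """Starting level implied by a final level and the multiple it grew by."""
    return final_twh / multiple


if __name__ == "__main__":
    print(f"AI demand doubles every {doubling_time_years(AI_ANNUAL_GROWTH):.2f} years")
    print(f"AI demand multiplier over 4 years: {growth_multiplier(AI_ANNUAL_GROWTH, 4):.2f}x")
    print(f"Implied data center baseline: {implied_baseline_twh(DATA_CENTER_TWH_2028):.1f} TWh")
```

Note that the two numbers describe different things: the 44.7% rate applies to AI power demand, while the doubling applies to total data center consumption, of which AI is only one part.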

It also doesn’t have to happen. Custom silicon (unique silicon optimized for specific use cases) is already demonstrating how we can continue to increase performance while cutting power even as Moore’s Law fades into history. Custom may account for 25% of AI accelerators (XPUs) by 2028 [3], and that’s just one category of chips going custom.

The Data Infrastructure is the Computer

Jensen Huang’s vision for AI factories is apt. These coming AI data centers will churn at an unrelenting pace 24/7. And, like manufacturing facilities, their ultimate success or failure for service providers will be determined by operational excellence, the two-word phrase that rules manufacturing. Are we consuming more, or less, energy per token than our competitor? Why is mean time to failure rising? What’s the current overall equipment effectiveness (OEE)? In oil and chemicals, the end products sold to customers are indistinguishable commodities. Where they differ is in process design, leveraging distinct combinations of technologies to squeeze out marginal gains.

The same will occur in AI. Cloud operators already are engaged in differentiating their backbone facilities. Some have adopted optical switching to reduce energy and latency. Others have been more aggressive at developing their own custom CPUs. In 2010, the main difference between a million-square-foot hyperscale data center and a data center inside a regional office was size. Both were built around the same core storage devices, servers and switches. Going forward, diversity will rule, and the operators with the lowest cost, least downtime and ability to roll out new differentiating services and applications will become the favorite of businesses and consumers.

The best infrastructure, in short, will win.

The Custom Concept

And the chief way to differentiate infrastructure will be through custom infrastructure that is enabled by custom semiconductors, i.e., chips containing unique IP or features for achieving leapfrog performance for an application. It’s a spectrum ranging from AI accelerators built around a distinct, singular design to a merchant chip containing additional custom IP, cores and firmware to optimize it for a particular software environment. While the focus is now primarily on higher value chips such as AI accelerators, every chip will get customized: Meta, for example, recently unveiled a custom NIC, a relatively unsung chip that connects servers to networks, to reduce the impact of downtime.

By Michael Kanellos, Head of Influencer Relations, Marvell

For decades, computer architects have touted the performance and efficiency gains that can be achieved by replacing copper interconnects with optical technology in servers and processors [1].

With AI, it’s finally happening.

Marvell earlier this month announced that it will integrate co-packaged optics (CPO) technology into custom AI accelerators to improve the bandwidth, performance and efficiency of the chips powering AI training clusters and inference servers, opening the door to higher-performing scale-up servers.

The foundation of the offering is the Marvell 6.4Tbps 3D SiPho Engine announced in December 2023 and first demonstrated at OFC in March 2024. The 3D SiPho Engine effectively combines hundreds of components—drivers, transimpedance amplifiers, modulators, etc.—into a chiplet that itself becomes part of the XPU.

With CPO, XPUs will connect directly into an optical scale-up network, transmitting data farther, faster, and with less energy per bit. LightCounting estimates that shipments of CPO-enabled ports in servers and other equipment will rise from a nominal number per year today to over 18 million by 2029 [2].

Additionally, the bandwidth provided by CPO lets system architects think big. Instead of populating data centers with conventional servers containing four or eight XPUs, clouds can shift to systems sporting hundreds or even thousands of CPO-enhanced XPUs spread over multiple racks based around novel architectures—innovative meshes, torus networks—that can slash cost, latency and power. If supercomputers became clusters of standard servers in the 2000s, AI is shifting the pendulum back and turning servers into supercomputers again.

“It enables a huge diversity of parallelism schemes that were not possible with a smaller scale-up network domain,” wrote Dylan Patel of SemiAnalysis in a December article.

By Michael Kanellos, Head of Influencer Relations, Marvell

What happened in semis and accelerated infrastructure in 2024? Here is the recap:

1. Custom Controls the Future

Until relatively recently, computing performance was achieved by increasing transistor density à la Moore’s Law. In the future, it will be achieved through innovative design, and many of those innovative design ideas will come to market first, and mostly, through custom processors tailored to use cases, software environments and performance goals, thanks to a convergence of unusual and unstoppable forces [1] that quietly began years ago.

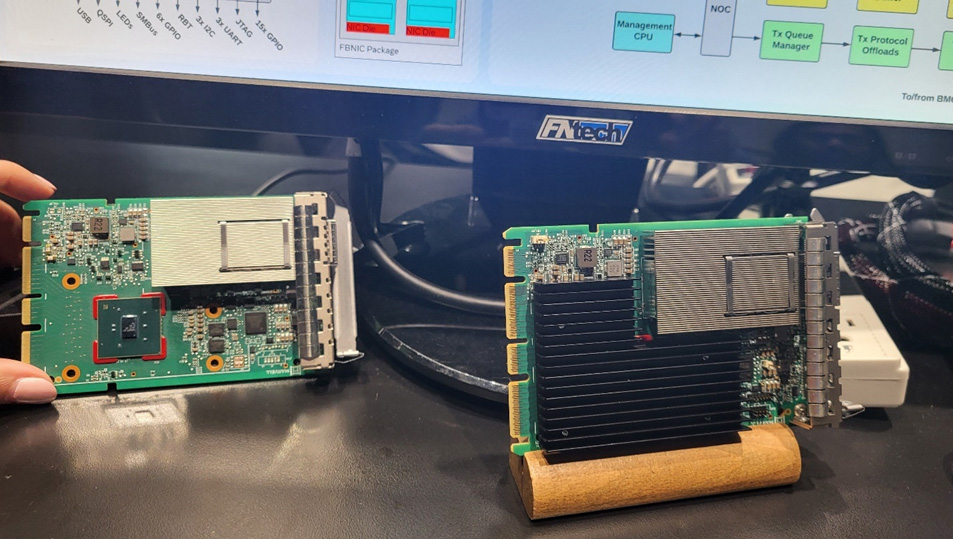

FB NIC on display at OFC