By Krishna Mallampati, Senior Director of Product Marketing, Data Center Switching, Marvell

Peripheral Component Interconnect Express (PCIe®) is the world’s most popular interconnect for low-latency connections between chips in a shared system, and it is well suited to the scale-up domain. Scale-up networks extend across racks and contain hundreds of processors; these systems, which form the foundation of AI data centers, require low latency and high bandwidth.

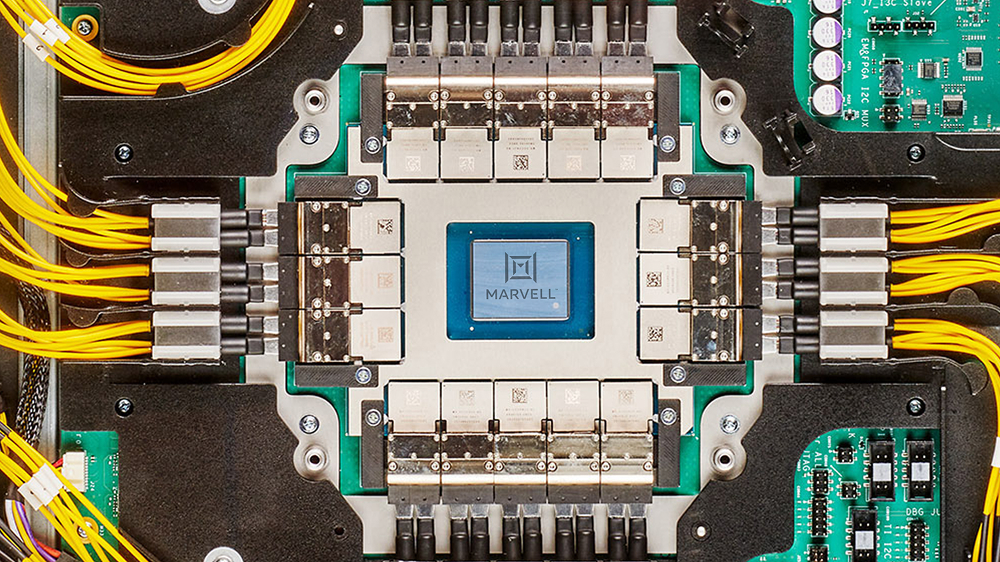

Marvell demonstrates the industry’s first 260-lane PCIe 6.0 switch in the video below, setting a new standard for PCIe scale-up performance: 256 lanes of data traffic (plus four lanes for management) is the industry’s highest radix for a PCIe switch.
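
To put the lane count in perspective, here is a rough back-of-the-envelope calculation of raw bandwidth. It assumes the PCIe 6.0 signaling rate of 64 GT/s per lane and ignores FLIT, FEC and protocol overhead, so delivered throughput would be lower. The split into x16 ports is an illustrative assumption, not a stated configuration of the switch.

```python
# Back-of-the-envelope raw bandwidth for a 256-data-lane PCIe 6.0 switch.
# Assumptions (not from the article): 64 GT/s per lane, one bit per transfer
# per lane, no FLIT/FEC/protocol overhead, and lanes grouped as x16 ports.

PCIE6_GT_PER_S = 64   # giga-transfers per second, per lane, per direction
DATA_LANES = 256      # data lanes cited above (management lanes excluded)


def raw_gbytes_per_s(lanes: int, gt_per_s: int = PCIE6_GT_PER_S) -> float:
    """Raw one-direction bandwidth in GB/s for a given lane count."""
    return lanes * gt_per_s / 8  # 8 bits per byte


x16_ports = DATA_LANES // 16
per_port = raw_gbytes_per_s(16)
aggregate = raw_gbytes_per_s(DATA_LANES)

print(f"x16 ports: {x16_ports}")
print(f"raw per x16 port: {per_port:.0f} GB/s per direction")
print(f"raw aggregate: {aggregate:.0f} GB/s per direction")
```

Under these assumptions, the 256 data lanes correspond to 16 x16 ports at 128 GB/s each, or 2,048 GB/s of raw bandwidth per direction.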

Traditional PCIe switch architectures require multiple devices to scale, racking up complexity and cost. However, the Marvell Structera S PCIe switch flattens the network and eliminates the need for multiple smaller switches in a large scale-up system. This enables higher density, lower latency and overall increased system efficiency, making it an optimal solution for hyperscale operators.
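
The effect of flattening can be sketched with a simple sizing model. The function below is a hypothetical illustration, not Marvell's own comparison: it assumes a full-bisection two-tier (leaf/spine) fabric in which each leaf switch uses half its ports for endpoints and half for uplinks, and the port counts in the example are made up for illustration.

```python
import math


def two_tier_fabric(endpoint_ports: int, radix: int) -> dict:
    """Size a full-bisection leaf/spine fabric built from radix-port switches.

    Illustrative model only: each leaf uses half its ports for endpoints
    and half for uplinks, with uplinks spread across the spine switches.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be a positive even number")
    if endpoint_ports <= radix:
        # Everything fits on one switch: a flat, single-hop network.
        return {"switches": 1, "max_switch_hops": 1}
    down = radix // 2                       # endpoint-facing ports per leaf
    leaves = math.ceil(endpoint_ports / down)
    if leaves > radix:
        # Each spine needs at least one port per leaf.
        raise ValueError("two tiers are not enough at this radix")
    spines = math.ceil(leaves * down / radix)
    # Worst-case path crosses leaf -> spine -> leaf.
    return {"switches": leaves + spines, "max_switch_hops": 3}


# Hypothetical comparison: 16 endpoint ports built from 8-port switches
# versus a single switch that offers all 16 ports itself.
small = two_tier_fabric(endpoint_ports=16, radix=8)
flat = two_tier_fabric(endpoint_ports=16, radix=16)
print(small)
print(flat)
```

In this toy case, the smaller switches need six devices and up to three switch traversals per path, while the single high-radix switch needs one device and one traversal.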

By Andrew Yick, Technical Associate Vice President, Operations Engineering, Marvell

This article was first published in Photonic Integrated Circuits magazine.

The dominant challenge in modern AI infrastructure is not just the performance of a single accelerator but scaling up to thousands of accelerators (XPUs) in a cluster. Training and inference workloads now depend on an interconnect that can stitch these accelerators into a high-bandwidth, low-latency system, where performance is governed as much by the network as by the compute itself.

As these systems scale, physics asserts itself. Electrical links over copper hit a practical ceiling as routing density and channel loss collide, turning the loss-bandwidth product into an impassable constraint. The choice is binary: either move electrical-to-optical conversion closer to the Application-Specific Integrated Circuit (ASIC) or surrender the link budget. Thus, to bypass this electrical wall, optics must migrate from the board edge onto the ASIC package.

By Uday Poosarla, Senior Director, Product Management, Photonic Fabric Business Unit, Marvell

Three critical constraints are shaping the evolution of AI infrastructure:

Together, these challenges point to a common problem: the increasing difficulty of moving data efficiently across AI systems, from compute to memory across the scale-up domain.

The Marvell® Photonic Fabric™ technology platform addresses these challenges by combining the advantages of optical interconnect with system-level design innovation.

By Michael Kanellos, Director of Content Marketing, and Vienna Alexander, Marketing Content Professional

At the International Semiconductor Industry Group (ISIG) Executive Summit in Silicon Valley, Marvell Senior Vice President of Foundry and Advanced Packaging Dr. Hamid Azimi received the 2026 Hall of Fame award. This recognition celebrates leaders whose lifetime contributions have had a lasting impact, significantly advancing the semiconductor industry.

Over a career spanning more than 30 years, Dr. Azimi has led teams that have achieved numerous industry-leading advances in packaging. He was part of the team that developed the first flip-chip packaging with Ajinomoto Build-Up Film (ABF) substrates, a combination that enables engineers to design packages containing far more, and much smaller, interconnects to improve signal integrity and power flow. Dr. Azimi and his team also took EMIB (Embedded Multi-die Interconnect Bridge) technology from scratch to high-volume manufacturing. EMIB is the first 2.5D interposer-less panel-level technology that enables ultra-large packages for AI data center products. He has also been a pioneer in glass substrates, a technology that could further enhance the capability of future generations of devices. Originally from a small village that “probably doesn’t even register on Google Maps,” Dr. Azimi holds over 40 patents. He earned a Ph.D. and M.S. in Materials Science from Lehigh University and a B.S. in Materials Engineering from Sharif University of Technology.

By Preet Virk, Senior Vice President and General Manager, Photonic Fabric Business Unit

Modern AI infrastructure is built around multi-rack systems where thousands to tens of thousands of accelerators operate as a single logical compute element. As agentic AI and Mixture of Experts (MoE) models accelerate AI adoption, they are driving unprecedented scale and communication demands across data center infrastructure. These systems are connected by scale-up and scale-out networks that must deliver high bandwidth, low latency and power efficiency. As these networks extend across racks, maintaining that performance becomes a primary challenge.

As AI systems grow in complexity and scale, the network becomes the backbone of the compute system. Large-scale clusters require massive XPU-to-XPU communication, driving an evolution beyond legacy protocols like PCIe® to encompass UALink™ (Ultra Accelerator Link), ESUN (Ethernet scale-up networking) and NVLink.

Meeting these requirements demands a new approach to connectivity. Marvell provides a comprehensive AI connectivity portfolio spanning scale-up, scale-out, scale-across and DCI (data center interconnect) network architectures. For scale-up networking, Marvell delivers copper and optical interconnects connecting XPUs, switches and memory. Within the rack, Marvell copper solutions provide low-latency, power-efficient short-reach connectivity, while Marvell optical interconnects enable high-performance scaling beyond the rack. This enables XPUs to operate as a more efficient, unified system as scale-up domains expand.