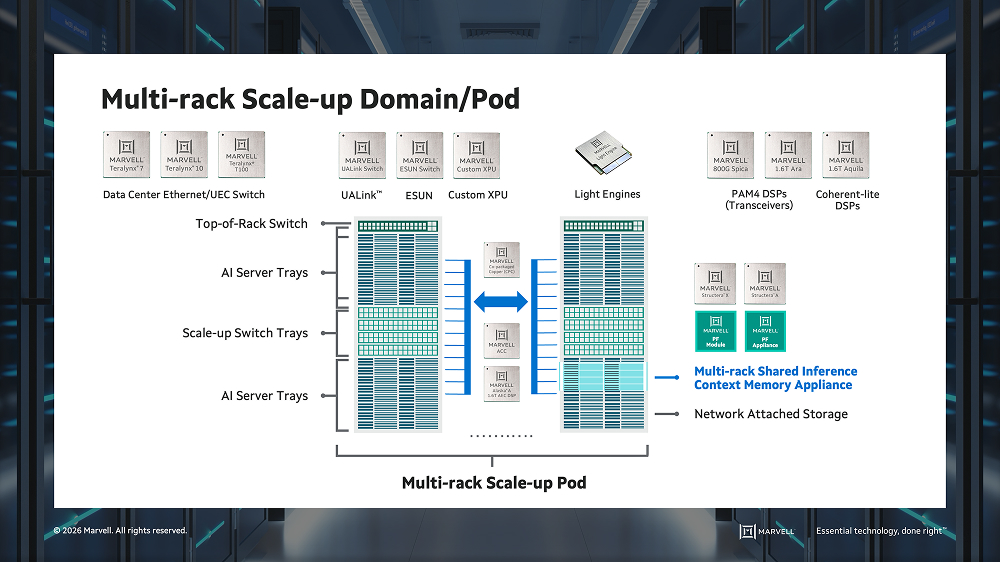

Modern AI infrastructure is built around multi-rack systems where thousands to tens of thousands of accelerators operate as a single logical compute element. As agentic AI and Mixture of Experts (MoE) models accelerate AI adoption, they are driving unprecedented scale and communication demands across data center infrastructure. These systems are connected by scale-up and scale-out networks that must deliver high bandwidth, low latency and power efficiency. As these networks extend across racks, maintaining that performance becomes a primary challenge.

As AI systems grow in complexity and scale, the network becomes the backbone of the compute system. Large-scale clusters require massive XPU-to-XPU communication, driving an evolution beyond legacy protocols like PCIe® to encompass UALink™ (Ultra Accelerator Link), ESUN (Ethernet scale-up networking) and NVLink.

Meeting these requirements demands a new approach to connectivity. Marvell provides a comprehensive AI connectivity portfolio spanning scale-up, scale-out, scale-across and DCI (data center interconnect) network architectures. For scale-up networking, Marvell delivers copper and optical interconnects connecting XPUs, switches and memory. Within the rack, Marvell copper solutions provide low-latency, power-efficient short-reach connectivity, while Marvell optical interconnects enable high-performance scaling beyond the rack. This enables XPUs to operate as a more efficient, unified system as scale-up domains expand.

The Marvell Advantage: End-to-End Connectivity for Every Domain

Marvell brings over a decade of silicon photonics and optical connectivity experience, delivering end-to-end solutions with the expertise and portfolio required to support the full data center connectivity stack. Delivering this at scale requires deep integration across compute, interconnect, switching and memory.

In the scale-up network domain, Marvell provides electrical and optical I/O (input/output) chiplets for the XPU and the switch.

Best-in-Class Interconnect Technologies

Marvell offers a diverse portfolio of connectivity choices, anchored by foundational SerDes leadership—ranging from production-proven 224G to state-of-the-art 448G technology.

CPC (Co-packaged Copper)

Optical Interconnects

With over a decade of experience shipping high-volume silicon photonics solutions for both DCI and inside the data center, Marvell has unmatched expertise. The company is leveraging this proven maturity to lead the industry’s transition to high-performance NPO and CPO architectures.

NPO (Near-packaged Optics)

CPO (Co-packaged Optics)

Beyond Connectivity: Memory and Supply Chain

Built on Experience: Proven at Scale

In the world of high-speed analog and silicon photonics, experience is built over time and cannot be accelerated, and its importance cannot be overstated. The development of silicon photonics is defined by a steep and complex learning curve. With one of the industry's largest and most seasoned silicon photonics teams, Marvell brings over a decade of institutional knowledge that cannot be easily replicated by teams building these capabilities for the first time.

With expertise in SerDes, optics, and advanced packaging, Marvell has already addressed many of the challenges others are only beginning to encounter. AI systems require tight integration across multiple domains, from high-performance SerDes to optics and packaging, where design trade-offs and system-level constraints must be solved together. These challenges cannot be addressed by assembling teams or integrating third-party IP—they require years of experience and deep system-level expertise. Organizations with that expertise drive the best outcomes.

As AI infrastructure scales, no single interconnect technology can meet the demands of next-generation systems. Delivering performance at scale requires a proven continuum of connectivity spanning electrical and optical architectures, from die-to-die to co-packaged optics and data center fabrics. The ability to enable high-performance connectivity across the entire system will define the next era of computing.

With a 30-year history of innovation, Marvell is already engaged in active programs with the world’s leading hyperscalers, delivering next-generation AI data center solutions at scale. Marvell connects compute end to end—from inside the package to across data centers. That’s how AI scales.

# # #

This blog contains forward-looking statements within the meaning of the federal securities laws that involve risks and uncertainties. Forward-looking statements include, without limitation, any statement that may predict, forecast, indicate or imply future events or achievements. Actual events or results may differ materially from those contemplated in this blog. Forward-looking statements are only predictions and are subject to risks, uncertainties and assumptions that are difficult to predict, including those described in the “Risk Factors” section of our Annual Reports on Form 10-K, Quarterly Reports on Form 10-Q and other documents filed by us from time to time with the SEC. Forward-looking statements speak only as of the date they are made. Readers are cautioned not to put undue reliance on forward-looking statements, and no person assumes any obligation to update or revise any such forward-looking statements, whether as a result of new information, future events or otherwise.
