By Robert Friend, Principal Engineer, Enterprise Network Marketing, Marvell

Multivendor environments have been the norm for decades to accelerate innovation, lower costs, and enable customers to optimize infrastructure with best-of-breed technologies.

But are there still hidden advantages to going with a vertically integrated vendor?

Marvell, Dell and Cisco set out to answer this question in storage networking by submitting two SAN solutions to Tolly, a technical benchmarking service: the Dell PowerStore 32G Enterprise Storage Solution¹ and the Dell PowerMax 64G Enterprise Storage Solution.² The solutions combine Marvell® QLogic® 32G and 64G Fibre Channel HBAs with Dell PowerEdge 17G servers, Connectrix MDS Switches and Directors, as well as PowerStore and PowerMax storage.

Tolly found the systems delivered on seamless interoperability. More importantly, the tests highlighted technologies from the individual vendors, such as SAN congestion mitigation and comprehensive security.

Better together, in other words, is better. The set-up and the test results are below.

FPINs and Virtual Lanes Offer Interoperability and Compatibility

The solutions offer comprehensive 32/64G Fibre Channel (FC) interoperability, making them easy to adopt for storage networking applications in the data center.

The features are also plug-and-play compatible for a seamless experience. The Marvell Fabric Performance Impact Notification frames (FPINs) and Virtual Lanes (VLs) for SAN endpoint connections, for example, pair well with Connectrix MDS’ Dynamic Ingress Rate Limiting (DIRL) inside the FC storage area network (SAN). Marvell HBAs uniquely offer these VLs, which are compatible with all FC switches, including those from solution partner Cisco.

By Chander Chadha, Director of Marketing, Flash Storage Products, Marvell

AI is all about dichotomies. Distinct computing architectures and processors have been developed for training and inference workloads. In the past two years, scale-up and scale-out networks have emerged.

Soon, the same will happen in storage.

The demands of AI infrastructure are prompting storage companies to develop SSDs, controllers, NAND and other technologies fine-tuned to support GPUs, with an emphasis on higher IOPS (input/output operations per second) for AI inference. These will be fundamentally different from those for CPU-connected drives, where latency and capacity are the bigger focus points. This drive bifurcation also likely won’t be the last; expect to also see drives optimized for training or inference.

As in other technology markets, the changes are being driven by the rapid growth of AI and the equally rapidly growing need to boost the performance, efficiency and TCO of AI infrastructure. The total amount of SSD capacity inside data centers is expected to double to approximately 2 zettabytes by 2028, with the growth primarily fueled by AI.¹ By that year, SSDs will account for 41% of the installed base of data center drives, up from 25% in 2023.¹

Greater storage capacity, however, also potentially means more storage network complexity, latency, and storage management overhead. It also potentially means more power. In 2023, SSDs accounted for 4 terawatt hours (TWh) of data center power, or around 25% of the 16 TWh consumed by storage. By 2028, SSDs are slated to account for 11 TWh, or 50%, of storage’s expected total for the year.¹ While storage represents less than five percent of total data center power consumption, the total remains large and provides incentives for saving. Reducing storage power by even 1 TWh, or less than 10%, would save enough electricity to power 90,000 US homes for a year.² Finding the precise balance between capacity, speed, power and cost will be critical for both AI data center operators and customers. Creating different categories of technologies becomes the first step toward optimizing products in a way that will be scalable.
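The power figures above can be checked with a few lines of arithmetic. The sketch below is only a sanity check, not part of the cited analysis: the variable names are illustrative, and the 2028 storage total is an assumption derived from the 11 TWh and 50% figures rather than a number stated in the text.

```python
# Figures quoted in the paragraph above, in terawatt hours (TWh) per year.
ssd_2023 = 4.0          # SSD power, 2023
storage_2023 = 16.0     # all storage power, 2023
ssd_2028 = 11.0         # SSD power, 2028 forecast
ssd_share_2028 = 0.50   # SSD share of storage power, 2028 forecast

# SSD share of storage power in 2023.
share_2023 = ssd_2023 / storage_2023

# Implied 2028 storage total (an assumption derived here, not stated in the text).
storage_2028 = ssd_2028 / ssd_share_2028

# A 1 TWh saving, expressed against the storage total and against SSDs alone.
saving_vs_storage = 1.0 / storage_2028
saving_vs_ssd = 1.0 / ssd_2028

# Annual electricity per home implied by "1 TWh powers 90,000 US homes".
KWH_PER_TWH = 1.0e9
kwh_per_home = KWH_PER_TWH / 90_000

print(f"2023 SSD share of storage power: {share_2023:.0%}")
print(f"Implied 2028 storage total: {storage_2028:.0f} TWh")
print(f"1 TWh vs. 2028 storage total: {saving_vs_storage:.1%}")
print(f"1 TWh vs. 2028 SSD power: {saving_vs_ssd:.1%}")
print(f"Implied use per home: {kwh_per_home:,.0f} kWh/year")
```

Under these assumptions, the 2028 storage total works out to 22 TWh, so a 1 TWh reduction is below 10% whether it is measured against all storage or against SSDs alone. The homes comparison implies roughly 11,100 kWh per home per year.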

By Michael Kanellos, Head of Influencer Relations, Marvell

The opportunity for custom silicon isn’t just getting larger – it’s becoming more diverse.

At the Custom AI Investor Event, Marvell executives outlined how the push to advance accelerated infrastructure is driving surging demand for custom silicon – reshaping the customer base, product categories and underlying technologies. (Here is a link to the recording and presentation slides.)

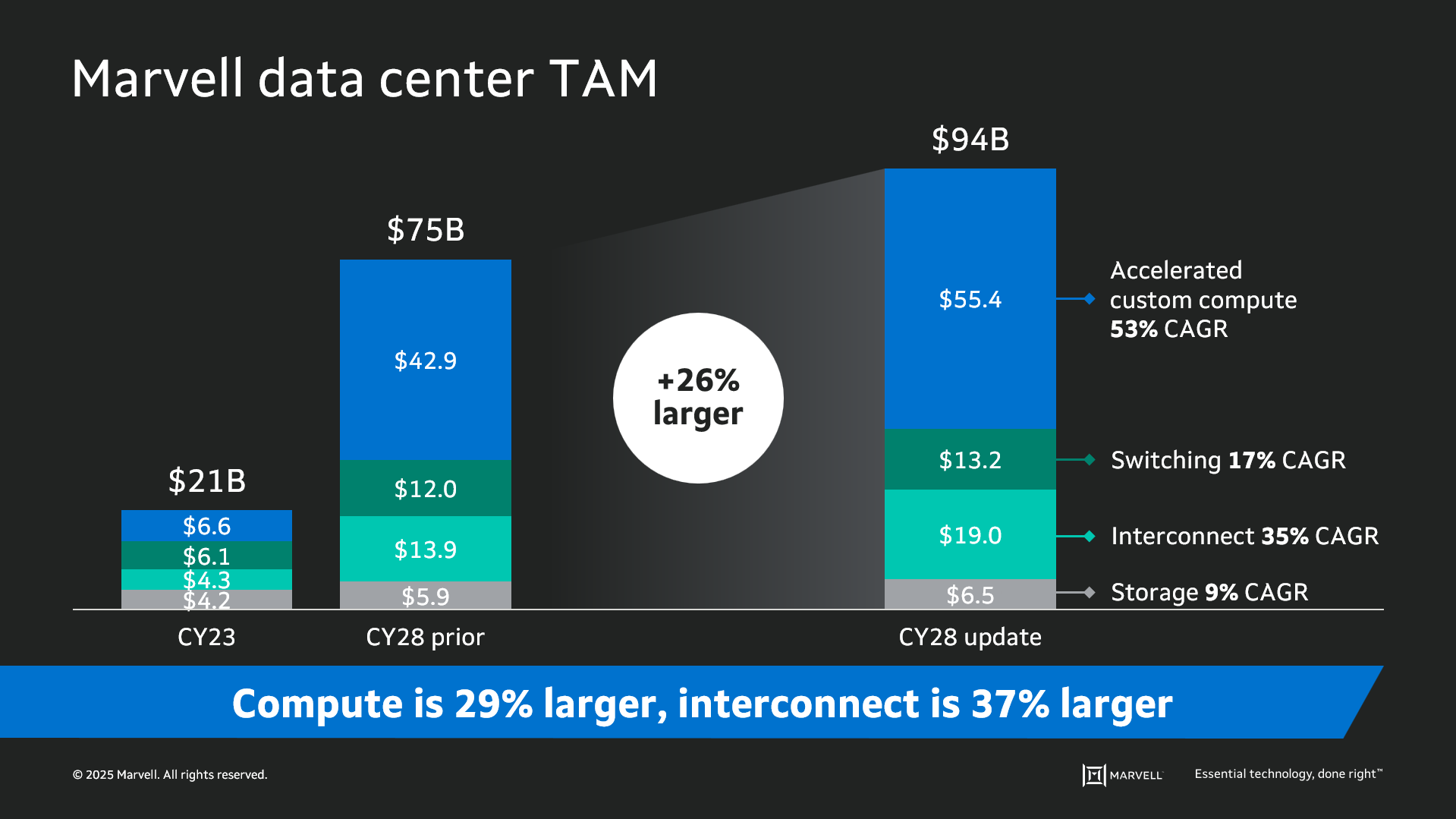

Data infrastructure spending is now slated to surpass $1 trillion by 2028,¹ with the Marvell total addressable market (TAM) for data center semiconductors rising to $94 billion by then, 26% larger than the forecast from a year earlier. Of that total, $55.4 billion revolves around custom devices for accelerated compute.¹ In fact, the forecast for every major product segment has risen in the past year, underscoring the growing momentum behind custom silicon.

The deeper you go into the numbers, the more compelling the story becomes. The custom market is evolving into two distinct elements: the XPU segment, focused on optimized CPUs and accelerators, and the XPU attach segment that includes PCIe retimers, co-processors, CPO components, CXL controllers and other devices that serve to increase the utilization and performance of the entire system. Meanwhile, the TAM for custom XPUs is expected to reach $40.8 billion by 2028, growing at a 47% CAGR.¹

By Kristin Hehir, Senior Manager, PR and Marketing, Marvell

Powered by the Marvell® Bravera™ SC5 controller, Memblaze developed the PBlaze 7 7940 GEN5 SSD family, delivering an impressive 2.5 times the performance and 1.5 times the power efficiency of conventional PCIe 4.0 SSDs, along with ~55/9 µs read/write latency.¹ This makes the SSD ideal for business-critical applications and high-performance workloads like machine learning and cloud computing. In addition, Memblaze utilized the innovative sustainability features of Marvell’s Bravera SC5 controllers for greater resource efficiency, reduced environmental impact and streamlined development efforts and inventory management.

By Todd Owens, Field Marketing Director, Marvell

While Fibre Channel (FC) has been around for a couple of decades now, the Fibre Channel industry continues to develop the technology in ways that keep it in the forefront of the data center for shared storage connectivity. Fibre Channel has always been a reliable technology, and continued innovations in performance, security and manageability have made Fibre Channel I/O the go-to connectivity option for business-critical applications that leverage the most advanced shared storage arrays.

A recent development that highlights the progress and significance of Fibre Channel is Hewlett Packard Enterprise’s (HPE) announcement of the latest offering in its Storage as a Service (SaaS) lineup with 32Gb Fibre Channel connectivity. HPE GreenLake for Block Storage MP powered by HPE Alletra Storage MP hardware features a next-generation platform connected to the storage area network (SAN) using either traditional SCSI-based FC or NVMe over FC connectivity. This innovative solution not only provides customers with highly scalable capabilities but also delivers cloud-like management, allowing HPE customers to consume block storage any way they desire: own and manage, outsource management, or consume on demand.

HPE GreenLake for Block Storage powered by Alletra Storage MP

At launch, HPE is providing FC connectivity for this storage system to the host servers and supporting both FC-SCSI and native FC-NVMe. HPE plans to provide additional connectivity options in the future, but the fact that it prioritized FC connectivity speaks volumes about customer demand for mature, reliable, and low-latency FC technology.