By Khurram Malik, Senior Director of Marketing, Custom Cloud Solutions, Marvell

Can AI beat a human at the game of twenty questions? Yes.

And can a server enhanced by CXL beat an AI server without it? Yes, and by a wide margin.

While CXL technology was originally developed for general-purpose cloud servers, the technology is now finding a home in AI as a vehicle for economically and efficiently boosting the performance of AI infrastructure. To this end, Marvell has been conducting benchmark tests on different AI use cases.

In December, Marvell, Samsung and Liqid showed how Marvell® Structera™ A CXL compute accelerators can reduce the time required for conducting vector searches (for analyzing unstructured data within documents) by more than 5x.

In February, Marvell showed how a trio of Structera A CXL compute accelerators can process more queries per second than a cutting-edge server CPU and at a lower latency while leaving the host CPU open for different computing tasks.

Today, this blog post will show how Structera CXL memory expanders can boost performance of inference tasks.

By Khurram Malik, Senior Director of Marketing, Custom Cloud Solutions, Marvell

While CXL technology was originally developed for general-purpose cloud servers, it is now emerging as a key enabler for boosting the performance and ROI of AI infrastructure.

The logic is straightforward. Training and inference require rapid access to massive amounts of data. However, the memory channels on today’s XPUs and CPUs struggle to keep pace, creating the so-called “memory wall” that slows processing. CXL breaks this bottleneck by leveraging available PCIe ports to deliver additional memory bandwidth, expand memory capacity and, in some cases, integrate near-memory processors. As an added advantage, CXL provides these benefits at a lower cost and lower power profile than the usual way of adding more processors.

To showcase these benefits, Marvell conducted benchmark tests across multiple use cases to demonstrate how CXL technology can elevate AI performance.

By Michael Kanellos, Head of Influencer Relations, Marvell

This story was also featured in Electronic Design

Some technologies experience stunning breakthroughs every year. In memory, it can be decades between major milestones. Burroughs invented magnetic memory in 1952 so ENIAC wouldn’t lose time pulling data from punch cards¹. In the 1970s, DRAM replaced magnetic memory, and in the 2010s, HBM arrived.

Compute Express Link (CXL) represents the next big step forward. CXL devices essentially take advantage of available PCIe interfaces to open an additional conduit that complements the overtaxed memory bus. More lanes, more data movement, more performance.

Additionally, and arguably more importantly, CXL will change how data centers are built and how they operate. It’s a technology that will have a ripple effect. Here are a few scenarios showing how it could affect infrastructure:

1. DLRM Gets Faster and More Efficient

Memory bandwidth, the amount of data that can be transferred from memory to a processor per second, has chronically been a bottleneck because processor performance increases far faster and more predictably than bus speed or bus capacity. To help contain that gap, designers have added more lanes or added co-processors.

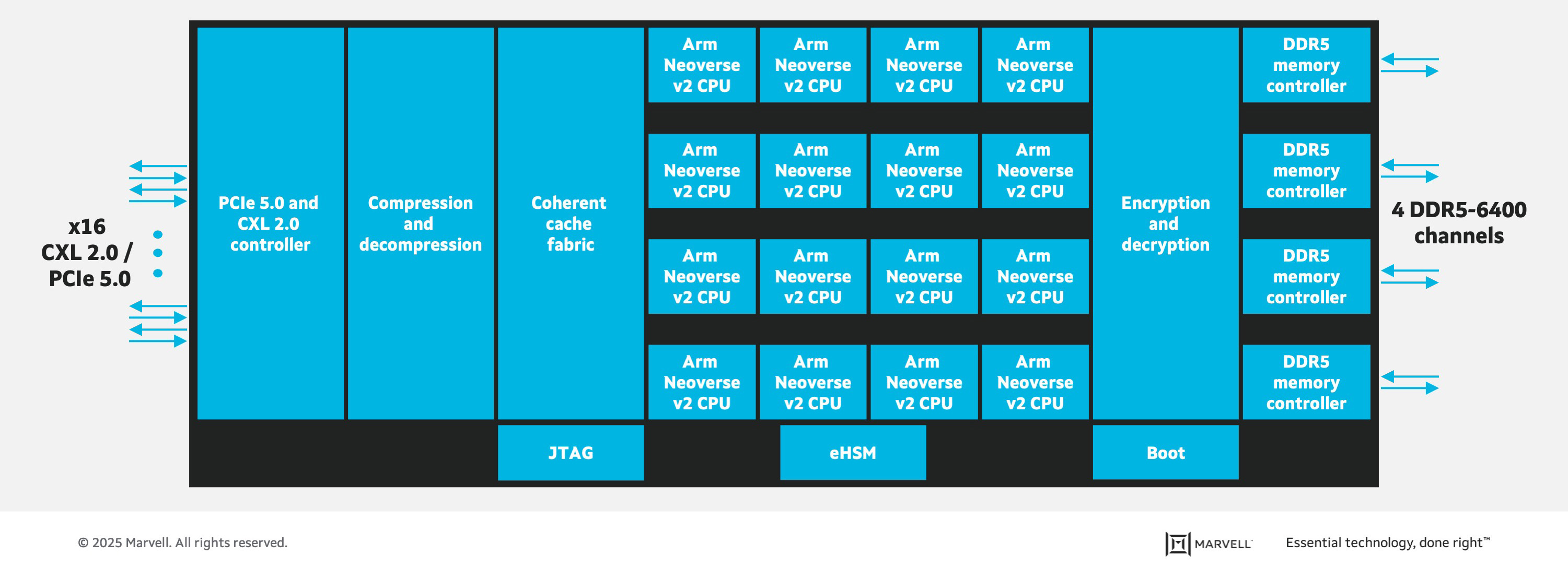

Marvell® Structera™ A does both. The first-of-its-kind device in a new industry category of memory accelerators, Structera A sports 16 Arm Neoverse N2 cores, 200 GB/sec of memory bandwidth and up to 4TB of memory, along with processing fabric and other Marvell-only technology, while consuming under 100 watts. It’s essentially a server-within-a-server with outsized memory bandwidth for bandwidth-intensive tasks like inference or deep learning recommendation models (DLRM). Cloud providers need to program their software to offload tasks to Structera A, but doing so brings a number of benefits.

Take a high-end x86 processor. Today it might sport 64 cores, 400 GB/sec of memory bandwidth, up to 2TB of memory (i.e. four top-of-the-line 512GB DIMMs), and consume a maximum of 400 watts, for a data transmission power rate of 1W per GB/sec.
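The power-per-bandwidth comparison can be worked through in a few lines. This is a rough sketch rather than a benchmark: it assumes both bandwidth figures are in GB/sec (the unit implied by the 1W per GB/sec rate), treats "under 100 watts" as a 100-watt upper bound, and uses a hypothetical helper name, `watts_per_gb_per_sec`.

```python
def watts_per_gb_per_sec(power_watts: float, bandwidth_gb_per_sec: float) -> float:
    """Return power spent per unit of memory bandwidth, in W per GB/sec."""
    if bandwidth_gb_per_sec <= 0:
        raise ValueError("bandwidth must be positive")
    return power_watts / bandwidth_gb_per_sec


# Figures quoted in the text. Bandwidth is ASSUMED to be in GB/sec.
x86_rate = watts_per_gb_per_sec(power_watts=400, bandwidth_gb_per_sec=400)

# "Under 100 watts" is treated as a 100 W ceiling, so this is an upper bound.
structera_a_rate_upper = watts_per_gb_per_sec(power_watts=100, bandwidth_gb_per_sec=200)

print(f"x86 processor: {x86_rate:.2f} W per GB/sec")
print(f"Structera A:   at most {structera_a_rate_upper:.2f} W per GB/sec")
```

Under those assumptions, the accelerator moves each GB/sec of data for no more than about half the power of the host processor.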

By Lindsey Moore, Marketing Coordinator, Marvell

Flash Memory Summit, the industry's largest trade show dedicated to flash memory and solid-state storage technology, presented its 2020 Best of Show Awards yesterday in a virtual ceremony. Marvell, alongside Hewlett Packard Enterprise (HPE), was named a winner for "Most Innovative Flash Memory Technology" in the controller/system category for the Marvell NVMe RAID accelerator in the HPE OS Boot Device.

Last month, Marvell introduced the industry’s first native NVMe RAID 1 accelerator, a state-of-the-art technology for virtualized, multi-tenant cloud and enterprise data center environments which demand optimized reliability, efficiency, and performance. HPE is the first of Marvell's partners to support the new accelerator in the HPE NS204i-p NVMe OS Boot Device offered on select HPE ProLiant servers and HPE Apollo systems. The solution lowers data center total cost of ownership (TCO) by offloading RAID 1 processing from costly and precious server CPU resources, maximizing application processing performance.

Copyright © 2026 Marvell, All rights reserved.